“We need to find a path forward for life after Moore’s Law,” Nvidia CEO Jen-Hsun Huang said at the beginning of his annual GPU Technology Conference keynote. But Nvidia isn’t hesitant to throw around more iron to make its ferocious graphics processors even more so, as evidenced by the reveal of the first product based on Nvidia’s badass next-gen Volta GPU.

Nvidia’s high-end “Pascal” processors still rule the graphics roost, though AMD’s rival Radeon Vega GPUs are scheduled to launch before the end of June. Volta helps Nvidia take some of the wind out of AMD’s sails before Vega even hits the streets, even if the Tesla V100 GPU is focused on data centers.

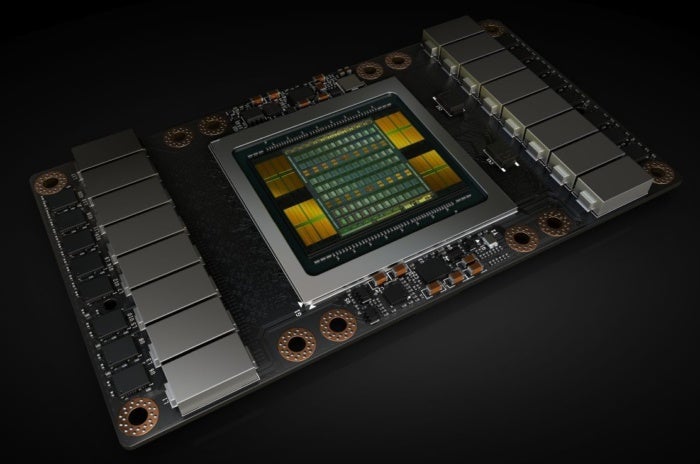

This GPU, beastly in both size and capabilities, boasts a whopping 21 billion transistors and 5,120 CUDA cores humming along at 1,455MHz boost clock speeds, all built using a 12-nanometer manufacturing process more advanced than that of Nvidia’s current GPUs. By comparison, today’s Pascal GPU flagship, the 16nm Tesla P100, offers 3,584 CUDA cores and 15 billion transistors. The GeForce GTX 1060 has a quarter as many CUDA cores as the Tesla V100, at 1,280.

To fit all that tech, the Volta GPU in the Tesla V100 measures a borderline ridiculous 815 square millimeters, compared to roughly 600 square millimeters for the Tesla P100’s GPU. Monstrous.

Nvidia’s Volta-based Tesla V100. (Image: Nvidia)

Volta, created using an R&D budget of over $3 billion, is “at the limits of photolithography,” Huang said with a smirk.
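A quick back-of-the-envelope check helps put that "limits of photolithography" remark in context. The sketch below uses only the transistor counts and approximate die sizes quoted above (21 billion on 815 square millimeters for the V100, 15 billion on roughly 600 for the P100). The variable and function names are illustrative, and the rounded inputs mean the results are ballpark figures at best.

```python
# Rough transistor density from the article's rounded figures.
# All names here are illustrative; the inputs are the article's numbers.
chips = {
    "Tesla V100 (Volta)": {"transistors": 21e9, "die_mm2": 815},
    "Tesla P100 (Pascal)": {"transistors": 15e9, "die_mm2": 600},
}


def density_millions_per_mm2(transistors, die_mm2):
    """Return transistor density in millions of transistors per mm^2."""
    return transistors / die_mm2 / 1e6


densities = {
    name: density_millions_per_mm2(**spec) for name, spec in chips.items()
}

v100 = chips["Tesla V100 (Volta)"]
p100 = chips["Tesla P100 (Pascal)"]
transistor_ratio = v100["transistors"] / p100["transistors"]
area_ratio = v100["die_mm2"] / p100["die_mm2"]

for name, value in densities.items():
    print(f"{name}: {value:.1f} million transistors per mm^2")
print(f"Transistor count ratio (V100 / P100): {transistor_ratio:.2f}x")
print(f"Die area ratio (V100 / P100): {area_ratio:.2f}x")
```

On these rounded numbers, density barely moves between the two chips, which suggests that most of the extra transistors come from a bigger die and not from tighter packing.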

Nvidia says it’s redesigned Volta’s streaming multiprocessor architecture to be 50 percent more efficient than Pascal’s, which is damned impressive if it proves true. That enables “major boosts in FP32 and FP64 performance in the same power envelope,” Nvidia says. The Tesla V100 also includes new “tensor cores” built specifically for deep learning, providing 12 times the teraflops throughput of the Pascal-based Tesla P100, Huang said. (Google’s also invested in tensor processing hardware.)

The Tesla V100 hits a peak of:

- 7.5 TFLOP/s of double precision floating-point (FP64) performance;

- 15 TFLOP/s of single precision (FP32) performance;

- 120 Tensor TFLOP/s of mixed-precision matrix-multiply-and-accumulate.
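Those peak figures follow fairly directly from the core count and boost clock quoted earlier. The sketch below reproduces them under a few assumptions that are not stated in this article but match Nvidia's published V100 architecture details: each core retires one fused multiply-add (two floating-point operations) per clock, there are half as many FP64 units as FP32 cores, and the chip carries 640 tensor cores that each perform 64 fused multiply-adds per clock. The function and constant names are purely illustrative.

```python
# Figures taken from the article.
BOOST_CLOCK_GHZ = 1.455
FP32_CORES = 5120

# Assumptions NOT stated in the article (based on Nvidia's published
# V100 architecture details).
FLOPS_PER_FMA = 2                   # a fused multiply-add counts as 2 ops
FP64_CORES = FP32_CORES // 2        # FP64 runs at half the FP32 rate
TENSOR_CORES = 640
FMA_PER_TENSOR_CORE_PER_CLOCK = 64  # one 4x4x4 matrix multiply-accumulate


def peak_tflops(units, fma_per_unit_per_clock, clock_ghz):
    """Theoretical peak in TFLOP/s: units x FMAs/clock x 2 ops x clock."""
    gflops = units * fma_per_unit_per_clock * FLOPS_PER_FMA * clock_ghz
    return gflops / 1000.0


fp32 = peak_tflops(FP32_CORES, 1, BOOST_CLOCK_GHZ)
fp64 = peak_tflops(FP64_CORES, 1, BOOST_CLOCK_GHZ)
tensor = peak_tflops(
    TENSOR_CORES, FMA_PER_TENSOR_CORE_PER_CLOCK, BOOST_CLOCK_GHZ
)

print(f"FP64:   {fp64:.1f} TFLOP/s")
print(f"FP32:   {fp32:.1f} TFLOP/s")
print(f"Tensor: {tensor:.1f} TFLOP/s")
```

The results land slightly under the rounded marketing numbers (about 7.4, 14.9, and 119.2 TFLOP/s). Keep in mind these are theoretical ceilings at boost clock, not sustained real-world throughput.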

Seriously, the Volta GPU inside the Tesla V100 is MASSIVE. (Image: Nvidia)

The Tesla V100 utilizes 16GB of ultra-fast HBM2 (second-generation high-bandwidth memory) on a 4096-bit interface to process data quickly. It’s unknown whether Volta-based consumer graphics cards will feature HBM2, however. Radeon Vega does, but the tech is still relatively new and pricey. The GeForce GTX 10-series graphics cards debuted with new GDDR5X technology based on classic memory designs, and SK Hynix recently said that it’s “planning to mass produce the product for a client to release high-end graphics card by early 2018 equipped with high performance GDDR6 DRAMs.”

That HBM2 memory hits 900GB/s speeds, Nvidia says, and the Tesla V100 features a second-gen version of Nvidia’s NVLink technology. At 300GB/s transfer speeds, Huang claims NVLink is now 10 times faster than standard PCIe connections.
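To see roughly where those bandwidth numbers come from, the sketch below works backward from the 4096-bit interface and the 900GB/s figure to an implied per-pin data rate, then compares NVLink against a PCIe 3.0 x16 slot. The PCIe parameters (8 GT/s per lane, 128b/130b encoding, 16 lanes) are assumptions about what "standard PCIe" means here, as is the reading of 300GB/s as an aggregate figure counting both directions. Treat it as an illustration, not Nvidia's own math.

```python
# Figures taken from the article.
HBM2_BUS_BITS = 4096
HBM2_BANDWIDTH_GB_S = 900
NVLINK_GB_S = 300  # assumed to be aggregate, counting both directions

# Implied data rate per memory pin, in gigabits per second.
per_pin_gbps = HBM2_BANDWIDTH_GB_S * 8 / HBM2_BUS_BITS

# Assumptions NOT in the article: "standard PCIe" means PCIe 3.0 x16.
PCIE3_GT_S_PER_LANE = 8.0
PCIE3_ENCODING_EFFICIENCY = 128 / 130
PCIE_LANES = 16

# Usable bytes per second in one direction, then both directions combined.
pcie_one_way_gb_s = (
    PCIE3_GT_S_PER_LANE * PCIE3_ENCODING_EFFICIENCY * PCIE_LANES / 8
)
pcie_bidirectional_gb_s = 2 * pcie_one_way_gb_s

nvlink_vs_pcie = NVLINK_GB_S / pcie_bidirectional_gb_s

print(f"Implied HBM2 rate: {per_pin_gbps:.2f} Gbit/s per pin")
print(f"PCIe 3.0 x16: {pcie_bidirectional_gb_s:.1f} GB/s bidirectional")
print(f"NVLink advantage: {nvlink_vs_pcie:.1f}x")
```

Under these assumptions the ratio comes out a little under 10, which is in line with Huang's round "10 times faster" claim.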

Inside the Tesla V100. (Image: Nvidia)

If you want to know more about Volta’s datacenter and architecture details, be sure to check out Nvidia’s in-depth Tesla V100 explainer. Look for the Tesla V100 to launch in a revamped version of Nvidia’s pricey DGX-1 system in the third quarter, and more widely in the fourth quarter.

The impact on you at home: None, immediately. But this first glimpse at Volta gives us an idea of what Nvidia’s next-gen GeForce graphics cards will be capable of. Remember, Nvidia revealed its Pascal GPU at GTC 2016 in the form of the Tesla P100, and that full-fat version eventually trickled down into the Titan Xp, with the GeForce GTX 1080 Ti coming damned close. The Tesla V100’s extreme GPU size and strong machine learning focus skew that somewhat when it comes to Volta, though.

One more thing: The GeForce GTX 10-series launched a mere month after Pascal’s GTC reveal, about one year ago. Don’t necessarily expect Nvidia’s Volta-based GeForce cards to launch in the near future—especially if SK Hynix’s GDDR6 memory is indeed headed for Nvidia cards rather than next-gen Radeons.